What are AI Agents?

An artificial intelligence (AI) agent is a system or program that autonomously performs tasks on behalf of a user or another system by designing its own workflow and using the tools available to it. Autonomous AI agents can interpret customers’ questions expressed in natural language and translate them into business solutions.

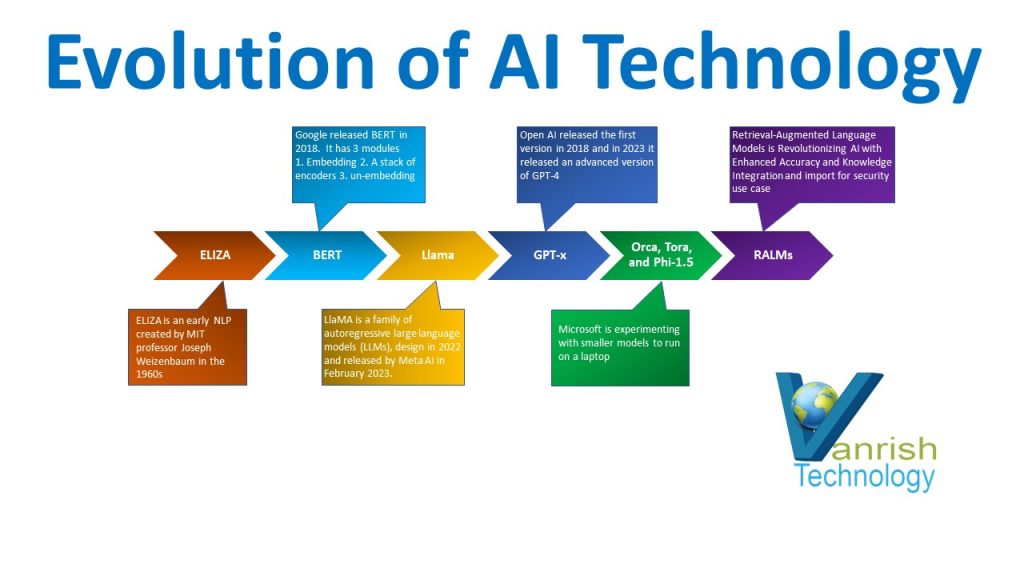

AI journey

AI has gained a lot of momentum in recent years. Predictive analytics was the first wave of AI. Generative AI brought the second wave to industry. We are now entering the third wave: autonomous agents. Autonomous AI agents open a new horizon for AI implementation and AI strategy, a paradigm shift that will transform how we carry out our daily tasks and business processes.

How do AI agents work?

AI agents make decisions autonomously, but they require goals and environments defined by humans. Once those are in place, an agent typically works through the following steps.

- Data collection and preparation – AI agents start by gathering data from all available sources, including customer data, transaction data, and social media. This data helps the agent understand the context and the user-defined goals.

- Decision-making – AI agents analyze the collected data with machine learning models to identify patterns and make decisions.

- Action execution – Once a decision is made, AI agents can execute business actions. These actions include answering customer queries, processing documents, running a process, or driving a complex user flow.

- Learning and adaptation – AI agents continuously learn from each interaction, refining their algorithms to improve accuracy and effectiveness. They keep updating their knowledge base and enhancing their models.
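The four steps above can be sketched as a small loop. This is a minimal illustration only, not how any particular product implements agents: the `SimpleAgent` class, its method names, and the keyword-to-queue "knowledge base" are all hypothetical, and a real agent would use machine learning models rather than a lookup table.

```python
from dataclasses import dataclass, field


@dataclass
class SimpleAgent:
    """Hypothetical toy agent: routes a customer query to a queue."""

    # Learned keyword -> queue associations (stand-in for a knowledge base).
    knowledge: dict = field(default_factory=dict)
    default_queue: str = "general"

    def collect(self, query: str) -> list:
        # Step 1: gather and prepare data (here, lowercase tokens).
        return query.lower().split()

    def decide(self, tokens: list) -> str:
        # Step 2: choose a queue based on what has been learned so far.
        for token in tokens:
            if token in self.knowledge:
                return self.knowledge[token]
        return self.default_queue

    def act(self, query: str, queue: str) -> str:
        # Step 3: execute the action (here, just report the routing).
        return f"routed '{query}' to {queue}"

    def learn(self, tokens: list, correct_queue: str) -> None:
        # Step 4: update the knowledge base from feedback.
        # Crude on purpose: every token is associated with the queue.
        for token in tokens:
            self.knowledge[token] = correct_queue

    def handle(self, query: str) -> str:
        tokens = self.collect(query)
        return self.act(query, self.decide(tokens))


agent = SimpleAgent()
first = agent.handle("refund my invoice")        # nothing learned yet
agent.learn(agent.collect("refund my invoice"), "billing")
second = agent.handle("invoice question")        # uses the feedback
print(first)
print(second)
```

The first query falls back to the default queue because the agent has learned nothing yet; after feedback, a later query that shares a keyword is routed differently.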

How are AI agents helping organizations?

- Agents become building blocks that engage with data and services on your behalf.

- Developers are freed from repetitive coding tasks because AI agents take on this work.

- Organizations can monitor and secure a network of agents from a single agent control plane.

How will AI agents enable AI integration?

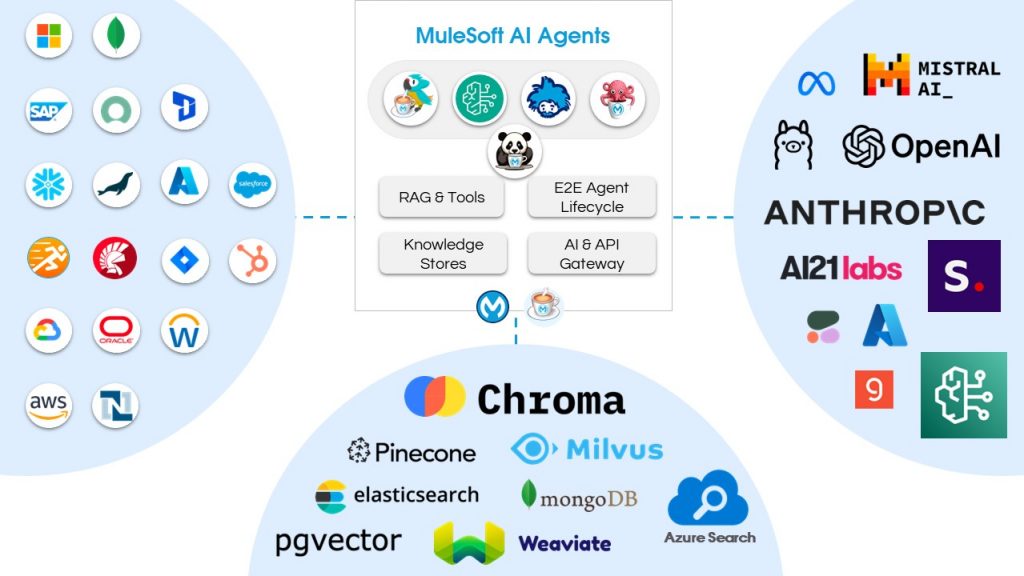

AI agents provide an AI unification layer that enables your integration with large language models (LLMs). This layer offers three benefits.

Easy: Development is almost no-code and builds on existing skills.

Flexible: You can connect multiple LLMs and switch to any model at any time. You can also connect multiple databases and adopt AI innovations as they arrive.

Manageable: Deploy your AI building blocks anywhere and secure them. Controlling them from one place is easy and reduces operating cost.
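To make the idea of a unification layer concrete, here is a minimal sketch of one common interface in front of several interchangeable models. Everything in it is an assumption for illustration: `ChatModel`, `UnifiedLLM`, `EchoModel`, and `UpperModel` are hypothetical names, and the two stand-in models return canned text instead of calling a real LLM provider.

```python
from typing import Dict, Optional, Protocol


class ChatModel(Protocol):
    """Hypothetical common interface every provider adapter implements."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in provider; a real adapter would call an LLM API here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Second stand-in provider with different behavior."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class UnifiedLLM:
    """One entry point for callers; the active model can be swapped."""

    def __init__(self) -> None:
        self._providers: Dict[str, ChatModel] = {}
        self._active: Optional[str] = None

    def register(self, name: str, model: ChatModel) -> None:
        self._providers[name] = model

    def use(self, name: str) -> None:
        # Switch models without changing any calling code.
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no provider selected")
        return self._providers[self._active].complete(prompt)


llm = UnifiedLLM()
llm.register("echo", EchoModel())
llm.register("upper", UpperModel())

llm.use("echo")
reply_a = llm.complete("hello")
llm.use("upper")
reply_b = llm.complete("hello")
print(reply_a, reply_b)
```

The calling code only ever talks to `UnifiedLLM`, so switching providers is a single `use(...)` call rather than a code change.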

AI autonomous agents in MuleSoft

The MuleSoft Solution Engineering Team is working on an open-source AI agents project called MAC (MuleSoft AI Chain). This AI agent tool can connect to multiple LLMs and models and provides a unification layer across them. The MAC connector enables speech-to-text and text-to-speech for multiple LLM and model providers. It also builds on existing MuleSoft skills and API knowledge to integrate with any client system. You can secure and manage these AI agents through API Manager.

Types of AI agents

Scheduled: Agents that run in a defined time window and are completely autonomous.

Composed: Agents that can be triggered via APIs, for example from a portal, as part of an integration, or for data assessment.

Event-Driven: Agents that are triggered by events to serve distributed applications and consumers.

Batched: Agents that process a large set of data and distribute it intelligently to multiple consumers.
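The four types differ mainly in what triggers the agent. A minimal sketch of that idea follows, assuming a hypothetical `AgentRegistry` that maps each trigger type to a handler function. None of these names come from MuleSoft or MAC; the handlers are placeholders for real agent logic.

```python
from enum import Enum
from typing import Callable, Dict


class Trigger(Enum):
    SCHEDULED = "scheduled"        # fired by a timer in a defined window
    COMPOSED = "composed"          # fired by an API call
    EVENT_DRIVEN = "event-driven"  # fired by an event from another system
    BATCHED = "batched"            # fired with a large set of records


Handler = Callable[[object], str]


class AgentRegistry:
    """Hypothetical registry: one handler per trigger type."""

    def __init__(self) -> None:
        self._handlers: Dict[Trigger, Handler] = {}

    def on(self, trigger: Trigger) -> Callable[[Handler], Handler]:
        # Decorator that registers a handler for the given trigger.
        def decorator(func: Handler) -> Handler:
            self._handlers[trigger] = func
            return func
        return decorator

    def dispatch(self, trigger: Trigger, payload: object) -> str:
        if trigger not in self._handlers:
            raise LookupError(f"no agent registered for {trigger.value}")
        return self._handlers[trigger](payload)


registry = AgentRegistry()


@registry.on(Trigger.COMPOSED)
def api_agent(payload: object) -> str:
    return f"API request handled: {payload}"


@registry.on(Trigger.BATCHED)
def batch_agent(payload: object) -> str:
    records = list(payload)  # assumes the payload is an iterable of records
    return f"processed {len(records)} records"


api_result = registry.dispatch(Trigger.COMPOSED, "order status")
batch_result = registry.dispatch(Trigger.BATCHED, ["a", "b", "c"])
print(api_result)
print(batch_result)
```

In practice the scheduled and event-driven triggers would be wired to a scheduler and a message broker; they are left unregistered here to keep the sketch short.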

Please reach out to us if you would like to know more about AI agents and how to integrate them with your systems.

Rajnish Kumar, the CTO of Vanrish Technology, brings over 25 years of experience across various industries and technologies. He has been recognized with the “AI Advocate and MuleSoft Community Influencer Award” from the Salesforce/MuleSoft Community, showcasing his dedication to advancing technology. Rajnish is actively involved as a MuleSoft Mentor/Meetup leader, demonstrating his commitment to sharing knowledge and fostering growth in the tech community.

His passion for innovation shines through in his work, particularly in cutting-edge areas such as APIs, the Internet of Things (IoT), the Artificial Intelligence (AI) ecosystem, and cybersecurity. Rajnish actively engages with audiences at Salesforce Dreamforce and World Tour events, on podcasts, and through other avenues, where he shares his insights and expertise to assist customers on their digital transformation journey.